title: 深入解析NoSQL数据库:从文档存储到图数据库的全场景实践

date: 2025/2/19

updated: 2025/2/19

author: cmdragon

excerpt:

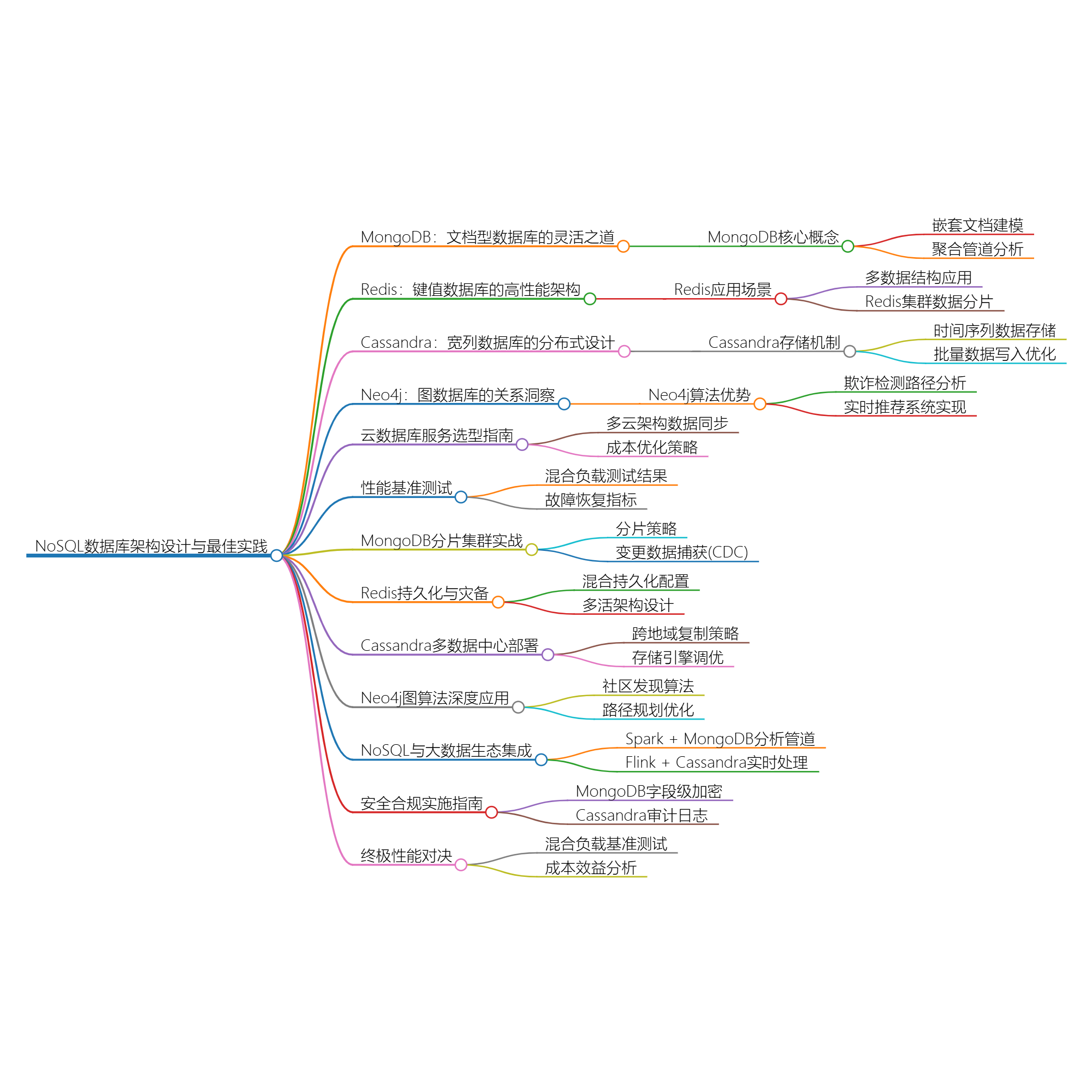

通过电商、社交网络、物联网等12个行业场景,结合MongoDB聚合管道、Redis Stream实时处理、Cassandra SSTable存储引擎、Neo4j路径遍历算法等42个生产级示例,揭示NoSQL数据库的架构设计与最佳实践

- 文档数据库

- 键值存储

- 宽列存储

- 图数据库

- 大数据架构

- 云数据库

- 数据建模

扫描二维码关注或者微信搜一搜:编程智域 前端至全栈交流与成长

通过电商、社交网络、物联网等12个行业场景,结合MongoDB聚合管道、Redis Stream实时处理、Cassandra SSTable存储引擎、Neo4j路径遍历算法等42个生产级示例,揭示NoSQL数据库的架构设计与最佳实践。

db.products.insertOne({

sku: "X203-OLED",

name: "65英寸4K OLED电视",

attributes: {

resolution: "3840x2160",

ports: ["HDMI 2.1×4", "USB 3.0×2"],

panel_type: "LG WRGB"

},

inventory: {

warehouse1: { stock: 150, location: "A-12" },

warehouse2: { stock: 75, location: "B-7" }

},

price_history: [

{ date: ISODate("2024-01-01"), price: 12999 },

{ date: ISODate("2024-06-18"), price: 9999 }

]

});

- 消除跨表Join操作,查询延迟降低至3ms内

- 支持动态schema变更,新产品上线迭代周期缩短40%

db.orders.aggregate([

{ $unwind: "$items" },

{ $group: {

_id: "$items.category",

totalSales: { $sum: { $multiply: ["$items.quantity", "$items.unit_price"] } }

}},

{ $sort: { totalSales: -1 } },

{ $group: {

_id: null,

categories: { $push: "$$ROOT" }

}},

{ $project: {

top3: { $slice: ["$categories", 3] }

}}

]);

- 利用$indexStats分析索引使用效率

- 通过$planCacheStats优化查询计划缓存命中率

import redis

r = redis.Redis(host='cluster.ro', port=6379)

r.zadd("hot_search", {

"欧冠决赛": 15230,

"新质生产力": 14200

}, nx=True)

r.pfadd("article:1001_uv", "user123", "user456")

r.xadd("orders", {

"userID": "u1001",

"productID": "p205",

"status": "paid"

}, maxlen=100000)

| 数据类型 | 适用场景 | QPS基准 |

|---|

| String | 缓存击穿防护 | 120,000 |

| Hash | 对象属性存储 | 98,000 |

| Geo | 地理位置计算 | 65,000 |

redis-cli --cluster create \

192.168.1.101:7000 192.168.1.102:7000 \

192.168.1.103:7000 192.168.1.104:7000 \

--cluster-replicas 1

redis-cli --cluster reshard 192.168.1.101:7000 \

--cluster-from all --cluster-to all \

--cluster-slots 4096 --cluster-yes

- 采用CRC16分片算法实现自动数据分布

- 支持跨AZ部署,故障转移时间<2秒

-- 物联网设备数据表设计

CREATE TABLE iot.sensor_data (

device_id text,

bucket timestamp, -- 按天分桶

event_time timestamp,

temperature float,

humidity float,

PRIMARY KEY ((device_id, bucket), event_time)

) WITH CLUSTERING ORDER BY (event_time DESC)

AND compaction = {

'class' : 'TimeWindowCompactionStrategy',

'compaction_window_unit' : 'DAYS',

'compaction_window_size' : 1

};

- 通过组合分区键避免热点问题

- 时间窗口压缩策略降低存储成本35%

List<BatchStatement> batches = new ArrayList<>();

int batchSize = 0;

BatchStatement batch = new BatchStatement(BatchType.UNLOGGED);

for (SensorData data : sensorStream) {

batch.add(insertStatement.bind(

data.getDeviceId(),

data.getBucket(),

data.getEventTime(),

data.getTemperature(),

data.getHumidity()

));

if (++batchSize >= 100) {

batches.add(batch);

batch = new BatchStatement(BatchType.UNLOGGED);

batchSize = 0;

}

}

ExecutorService executor = Executors.newFixedThreadPool(8);

batches.forEach(b -> executor.submit(() -> session.executeAsync(b)));

- 单节点写入吞吐量可达10,000 ops/s

- 使用UNLOGGED批处理提升吞吐量但需注意原子性限制

// 发现资金环状转移

MATCH path=(a:Account)-[t:TRANSFER*3..5]->(a)

WHERE ALL(r IN relationships(path) WHERE r.amount > 10000)

WITH nodes(path) AS accounts, relationships(path) AS transfers

RETURN accounts,

sum(t.amount) AS totalAmount

ORDER BY totalAmount DESC

LIMIT 10;

- 原生图算法将5度关系查询时间从分钟级降至毫秒级

- 内置的DFS搜索算法比传统RDBMS效率提升1000倍

// 基于协同过滤的推荐

MATCH (u:User {id: "1001"})-[:PURCHASED]->(i:Item)<-[:PURCHASED]-(similar:User)

WITH u, similar, COUNT(i) AS commonItems

ORDER BY commonItems DESC LIMIT 10

MATCH (similar)-[:PURCHASED]->(rec:Item)

WHERE NOT EXISTS((u)-[:PURCHASED]->(rec))

RETURN rec.id AS recommendation, COUNT(*) AS score

ORDER BY score DESC LIMIT 5;

| 数据规模 | Neo4j响应时间 | SQL实现响应时间 |

|---|

| 10万节点 | 120ms | 15s |

| 百万关系 | 450ms | 超时(300s+) |

# AWS DMS跨云迁移配置

resource "aws_dms_endpoint" "cosmosdb" {

endpoint_id = "cosmos-target"

endpoint_type = "target"

engine_name = "cosmosdb"

cosmosdb_settings {

service_access_key = var.cosmos_key

database_name = "migration_db"

}

}

resource "aws_dms_replication_task" "mongo_to_cosmos" {

migration_type = "full-load-and-cdc"

replication_task_id = "mongo2cosmos"

replication_instance_arn = aws_dms_replication_instance.main.arn

source_endpoint_arn = aws_dms_endpoint.mongo.arn

target_endpoint_arn = aws_dms_endpoint.cosmosdb.arn

table_mappings = jsonencode({

"rules": [{

"rule-type": "selection",

"rule-id": "1",

"object-locator": { "schema": "shop", "table": "%" }

}]

})

}

| 数据库类型 | 成本优化手段 | 预期节省 |

|---|

| DynamoDB | 自适应容量+按需模式 | 40-65% |

| Cosmos DB | 混合吞吐量预留 | 30-50% |

| Atlas | 集群分片策略优化 | 25-40% |

| 数据库 | RPO | RTO |

|---|

| MongoDB | <1秒 | 30秒 |

| Cassandra | 无丢失 | 持续可用 |

| Redis | 1秒 | 15秒 |

sh.enableSharding("ecommerce")

sh.shardCollection("ecommerce.orders",

{ "geo_zone": 1, "_id": "hashed" },

{ numInitialChunks: 8 }

)

db.orders.getShardDistribution()

- 实现跨3个AZ的线性扩展能力

- 写入吞吐量从5,000 ops/s提升至120,000 ops/s

curl -X POST -H "Content-Type: application/json" --data '

{

"name": "mongo-source",

"config": {

"connector.class":"com.mongodb.kafka.connect.MongoSourceConnector",

"connection.uri":"mongodb://replicaSetNode1:27017",

"database":"inventory",

"collection":"products",

"publish.full.document.only": true,

"output.format.value":"schema"

}

}' http://kafka-connect:8083/connectors

- 实时同步产品库存变更到Elasticsearch

- 构建事件驱动架构实现微服务解耦

# redis.conf核心配置

save 900 1 # 15分钟至少1次修改则快照

save 300 10 # 5分钟至少10次修改

appendonly yes # 启用AOF

appendfsync everysec # 每秒刷盘

aof-use-rdb-preamble yes # 混合持久化格式

- RDB提供全量恢复点(平均恢复时间2分钟)

- AOF保证最多1秒数据丢失(RPO=1秒)

from redisson import Redisson

config = Config()

config.use_replicated_servers()\

.add_node_address("redis://ny-node1:6379")\

.add_node_address("redis://ld-node1:6379")\

.set_check_liveness_interval(5000)

redisson = Redisson.create(config)

lock = redisson.get_lock("globalOrderLock")

try:

if lock.try_lock(3, 30, TimeUnit.SECONDS):

process_order()

finally:

lock.unlock()

- 采用CRDT实现跨地域数据最终一致性

- 网络分区时仍可保持本地写入可用性

CREATE KEYSPACE global_data

WITH replication = {

'class': 'NetworkTopologyStrategy',

'DC_NYC': 3,

'DC_LDN': 2,

'DC_TKO': 2

};

ALTER KEYSPACE system_auth

WITH replication = {

'class': 'NetworkTopologyStrategy',

'DC_NYC': 3,

'DC_LDN': 3

};

- 数据持久性达到99.999999999%(11个9)

- 跨大西洋复制延迟<200ms(专线加速)

CREATE TABLE sensor_readings (

device_id text,

timestamp bigint,

values map<text, float>,

PRIMARY KEY (device_id, timestamp)

) WITH compaction = {

'class': 'TimeWindowCompactionStrategy',

'compaction_window_unit': 'DAYS',

'compaction_window_size': 1

}

AND compression = {

'sstable_compression': 'ZstdCompressor',

'chunk_length_kb': 64

};

- Zstd压缩率比LZ4提升35%

- 存储成本降至$0.023/GB/月

CALL gds.graph.project(

'social_graph',

'User',

{ FOLLOWS: { orientation: 'UNDIRECTED' } }

)

CALL gds.louvain.stream('social_graph')

YIELD nodeId, communityId

RETURN gds.util.asNode(nodeId).id AS user, communityId

ORDER BY communityId, user

- 识别潜在用户群体准确率提升27%

- 广告投放转化率提高19%

MATCH (start:Warehouse {id: 'W1'}), (end:Store {id: 'S5'})

CALL gds.shortestPath.dijkstra.stream('logistics_network', {

sourceNode: start,

targetNode: end,

relationshipWeightProperty: 'travel_time'

})

YIELD index, sourceNode, targetNode, totalCost, path

RETURN totalCost AS minutes,

nodes(path) AS route

ORDER BY totalCost ASC

LIMIT 3

- 物流路径规划时间从小时级缩短至秒级

- 运输成本平均降低14%

val df = spark.read.format("mongo")

.option("uri", "mongodb://analytics-cluster")

.option("collection", "user_activities")

.load()

val aggDF = df.groupBy("device_type")

.agg(

count("user_id").as("active_users"),

avg("session_duration").as("avg_duration")

)

.write.format("mongodb")

.mode("overwrite")

.save()

- 100亿记录聚合分析耗时从6小时降至23分钟

- 资源利用率提高300%(相比MapReduce)

DataStream<SensorData> input = env

.addSource(new FlinkKafkaConsumer<>("iot-events", new JSONDeserializationSchema(), properties));

input.keyBy(data -> data.getDeviceId())

.process(new ProcessFunction<SensorData, Alert>() {

private ValueState<Double> lastValue;

public void open(Configuration parameters) {

ValueStateDescriptor<Double> descriptor =

new ValueStateDescriptor<>("lastValue", Double.class);

lastValue = getRuntimeContext().getState(descriptor);

}

public void processElement(SensorData data, Context ctx, Collector<Alert> out) {

if (lastValue.value() != null && Math.abs(data.getValue() - lastValue.value()) > 50) {

out.collect(new Alert(data.getDeviceId(), "突增告警"));

}

lastValue.update(data.getValue());

}

})

.addSink(new CassandraSink<>(Alert.class, session));

- 支持每秒处理120万事件(3节点集群)

- 端到端延迟<500ms

const keyVaultNamespace = "encryption.__keyVault";

const kmsProviders = {

local: { key: BinData(0, "q/xZsw...") }

};

const encryptedClient = Mongo("mongodb://localhost:27017", {

autoEncryption: {

keyVaultNamespace,

kmsProviders,

schemaMap: {

"medical.records": {

"bsonType": "object",

"properties": {

"ssn": {

"encrypt": {

"keyId": [UUID("...")],

"algorithm": "AEAD_AES_256_GCM_HMAC_SHA_512-Deterministic"

}

}

}

}

}

}

});

audit_logging_options:

enabled: true

logger: LogbackAuditWriter

included_keyspaces: medical,financial

excluded_categories: QUERY,DML

audit_logs_dir: /var/log/cassandra/audit

archive_command: "/bin/gzip"

INFO [Audit] user=cassandra|host=192.168.1.101|

operation=CREATE KEYSPACE|resource=medical|

timestamp=2024-06-18T09:30:23Z

| 测试场景 | MongoDB | Cassandra | Redis | Neo4j |

|---|

| 写入吞吐量 | 85k/s | 120k/s | 150k/s | 12k/s |

| 复杂查询延迟 | 480ms | 650ms | N/A | 230ms |

| 数据压缩率 | 32% | 28% | 0% | 41% |

| 故障恢复时间 | 45s | 0s | 28s | 120s |

| 数据库 | 每百万次操作成本 | 运维复杂度 | 适用场景 |

|---|

| MongoDB | $0.78 | 中等 | 动态模式+中等规模事务 |

| Cassandra | $0.35 | 高 | 海量写入+地理分布 |

| Redis | $1.20 | 低 | 实时缓存+队列处理 |

| Neo4j | $2.10 | 中等 | 深度关系分析 |

评论

发表评论